Business, technology, and leadership perspectives from Zenitech.

Featured content

Exploring AI’s Next Frontier: New Beings AI eBook – Edition Two

AI in software development: the latest security analyses, integration of automated accessibility testing, and the acceleration of unit testing for robust web applications.

New Beings AI eBook – Edition One

Unlock the secrets of AI in software development with in-depth analysis of AI’s legal landscape, applications, and future.

AI Futures

AI Futures explores the exciting yet concerning potential of AI, including the future of AGI, evolving development tools, and the transformation of business processes.

All content

Modernising an ageing asset management system

Zenitech reinvents workspace design with a modern asset management system. From conference trial to business-wide implementation, a game-changing project.

Trunk based development: a journey to the present

Explore the transformative journey of Trunk Based Development in software engineering, a method that revolutionises integration and team efficiency.

Balancing AI-powered personalisation and security

Are we entering a new era where artificial intelligence (AI) not only understands our preferences but also safeguards our digital identities? In …

International Women’s Day Zenitech Interview

This International Women's Day, Zenitech's Annamária Vass shares her experiences and insights on women's empowerment in technology.

Creating a personalised job marketplace for increased satisfaction

Explore Zenitech's innovative approach to a personalised job marketplace, enhancing user satisfaction and efficiency in the gig economy.

Enhancing customer loyalty through remote design sprints

Coaching other Product Managers

Gain expert insights on coaching and mentoring product managers with Kay Sogo. Explore leadership skills and personal growth strategies.

Zenitech achieves AWS partner status

Zenitech joins AWS Select Tier, enhancing cloud solutions with certified expertise. A milestone in tech innovation and customer service.

The joys of a geo-mapping service integration

Unravel the complexities of integrating geo-mapping services in software development. Insights for navigating challenges and achieving efficient integration.

Exploring AI’s Next Frontier: New Beings AI eBook – Edition Two

AI in software development: the latest security analyses, integration of automated accessibility testing, and the acceleration of unit testing for robust web applications.

A true story about a microservice and its performance issues

Technological trends in recent years have increasingly moved towards the development of services that are as small, fast, and resource-efficient as possible. …

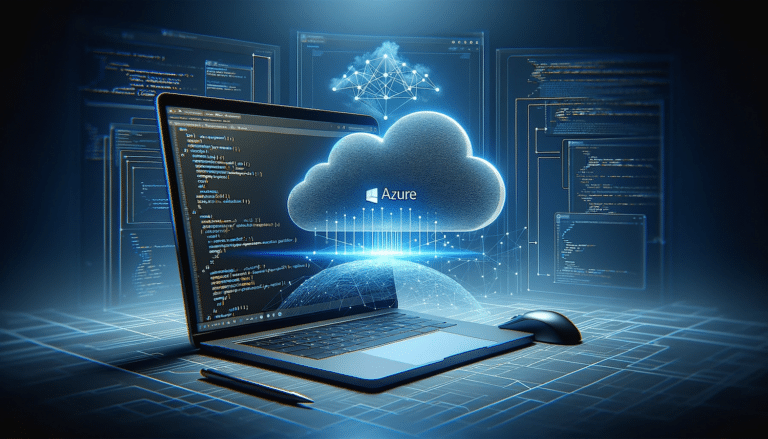

A deep-dive into Single Page Application hosting in Azure

Discover effective methods for hosting SPAs in Azure. Uncover advantages, challenges, and solutions tailored for Azure.

Integrating .NET applications with Keycloak

Unveil the secrets of .NET and Keycloak integration: Client logins, backend authentication, and REST API usage made easy.

Origami and Zenitech shortlisted for Development Team of the Year award

Origami & Zenitech earn finalist spot for Development Team of the Year, innovating the UK's energy tech sector.

Identity and user management in modern applications with Keycloak

Explore Keycloak for superior user management in apps. Secure, efficient, and versatile IAM integration.

The art of DevOps

Delve into the art of DevOps—beyond tools to culture, communication and creativity, for a unified, value-driven approach to software development.

Tips and tricks for a happy (or at least happier) life in Agile

Dive into actionable tips to refine your Agile practices, foster a feedback-rich environment, and boost team collaboration for a happier Agile experience.

New Beings AI eBook – Edition One

Unlock the secrets of AI in software development with in-depth analysis of AI's legal landscape, applications, and future.

Zenitech completes brand integration of AutSoft

Zenitech finalises integration of Budapest's AutSoft, unlocking a synergy of tech expertise & innovations across Europe.

Zenitech is once again a sponsor of the Országos IT Megmérettetés in 2023

Zenitech is pleased to support the 2023 Országos IT Megmérettetés again this year. The event has now been Hungary's largest online IT competition for seven years. Zenitech …

Benefits and challenges of end-to-end testing at Zenitech – a deep dive into the e2e testing world

Discover Zenitech's insights on end-to-end (e2e) testing. Learn the pros, cons, and techniques for a robust software testing approach.